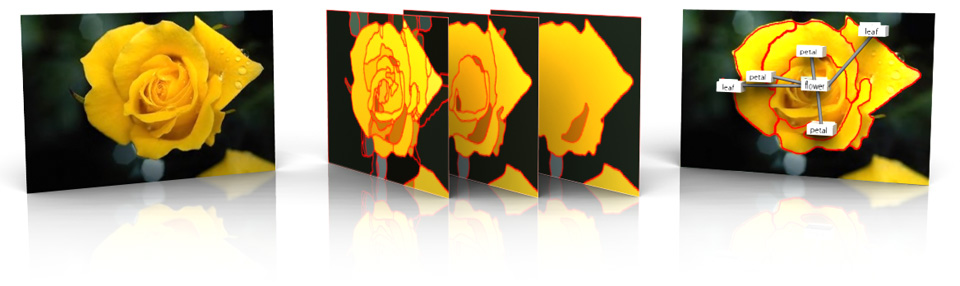

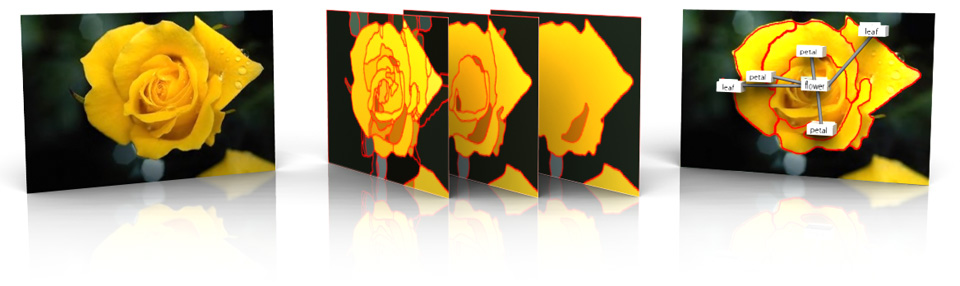

Suppose you want to effectively search through millions of images, train an algorithm to perform image and video object recognition, or research the complex patterns and relationships that exist in our visual world. A common and essential component for any of these tasks is a large annotated image dataset. However, obtaining labeled image data is a complex and tedious task that requires methods for annotating and structuring content. Therefore, we developed a comprehensive online tool and data structure, Markup SVG, that simplies the collection of annotated image data by leveraging state-of-the-art image processing techniques. As the core data structure of our tool, we adopt scalable vector graphics (SVG), an extensible and versatile language built upon XML.

As a core contribution of our framework, we wanted to standardize the visualization of several computer vision datasets with ground truth. Vision datasets have multiple formats and representations that make management and visualization of the data difficult. We also wanted to provide annotations and ground truth of other datasets that do not originally have labeled data. However, using our framework, we were easily able to collect this data through a variety of means, including manual annotation, modification of existing ground truth, and crowdsourcing.

Please note that the vision datasets and images presented here DO NOT belong to me and proper citations and attribution should be given to the original creators. ALL DATA presented here is for RESEARCH PURPOSES ONLY.

Caltech 101 Dataset

MSRC Dataset

Markup SVG - An Online Content-Aware Image Abstraction and Annotation Tool [PDF]

Supplemental Material

User Study: User study with 11 participants [PDF]

Amazon Mechanical Turk Study: MTurk study [PDF]

If you find any of this useful, please cite my paper - BibTeX